
P, -directory-prefix=PREFIX save files to PREFIX/. protocol-directories use protocol name in directories nH, -no-host-directories don't create host directories x, -force-directories force creation of directories nd, -no-directories don't create directories preferred-location preferred location for Metalink resources metalink-over-http use Metalink metadata from HTTP response headers metalink-index=NUMBER Metalink application/metalink4+xml metaurl ordinal NUMBER keep-badhash keep files with checksum mismatch (append. remote-encoding=ENC use ENC as the default remote encoding local-encoding=ENC use ENC as the local encoding for IRIs Specified the WGET_ASKPASS or the SSH_ASKPASS use-askpass=COMMAND specify credential handler for requesting password=PASS set both ftp and http password to PASS user=USER set both ftp and http user to USER prefer-family=FAMILY connect first to addresses of specified family,

6, -inet6-only connect only to IPv6 addresses 4, -inet4-only connect only to IPv4 addresses ignore-case ignore case when matching files/directories restrict-file-names=OS restrict chars in file names to ones OS allows no-dns-cache disable caching DNS lookups limit-rate=RATE limit download rate to RATE bind-address=ADDRESS bind to ADDRESS (hostname or IP) on local host Q, -quota=NUMBER set retrieval quota to NUMBER random-wait wait from 0.5*WAIT.1.5*WAIT secs between retrievals waitretry=SECONDS wait 1.SECONDS between retries of a retrieval w, -wait=SECONDS wait SECONDS between retrievals read-timeout=SECS set the read timeout to SECS connect-timeout=SECS set the connect timeout to SECS dns-timeout=SECS set the DNS lookup timeout to SECS T, -timeout=SECONDS set all timeout values to SECONDS S, -server-response print server response no-use-server-timestamps don't set the local file's timestamp by no-if-modified-since don't use conditional if-modified-since get N, -timestamping don't re-retrieve files unless newer than show-progress display the progress bar in any verbosity mode progress=TYPE select progress gauge type start-pos=OFFSET start downloading from zero-based position OFFSET c, -continue resume getting a partially-downloaded file no-netrc don't try to obtain credentials from. nc, -no-clobber skip downloads that would download to O, -output-document=FILE write documents to FILE retry-connrefused retry even if connection is refused t, -tries=NUMBER set number of retries to NUMBER (0 unlimits) rejected-log=FILE log reasons for URL rejection to FILE B, -base=URL resolves HTML input-file links (-i -F) input-metalink=FILE download files covered in local Metalink FILE i, -input-file=FILE download URLs found in local or external FILE report-speed=TYPE output bandwidth as TYPE. 
nv, -no-verbose turn off verboseness, without being quiet v, -verbose be verbose (this is the default) d, -debug print lots of debugging information a, -append-output=FILE append messages to FILE o, -output-file=FILE log messages to FILE e, -execute=COMMAND execute a `.wgetrc'-style command b, -background go to background after startup V, -version display the version of Wget and exit Mandatory arguments to long options are mandatory for short options too. GNU Wget 1.19.4, a non-interactive network retriever. Basic operation is fairly easy if you are used to command line tools. Here is the full list of Wget commands to get you started. wget -load-cookies cookies.txt -p All Wget commands Here is an example command showing how you can add the cookies file to Wget. Now you can use the cookie for as a form of credential when you attempt to download with Wget.

The file contains the authentication data needed to give access to the webpage. Save the cookie file as cookies.txt in the same folder as you intend to run wget from.

Just log in on the page using Google Chrome and download the cookie file for the site with the Chrome extension cookies.txt (or something similar). I have found that the following method works best for me. In this case, you do have to improvise a bit. Very often you will not be able to download a webpage with Wget because the webpage might be protected with login or authentication.

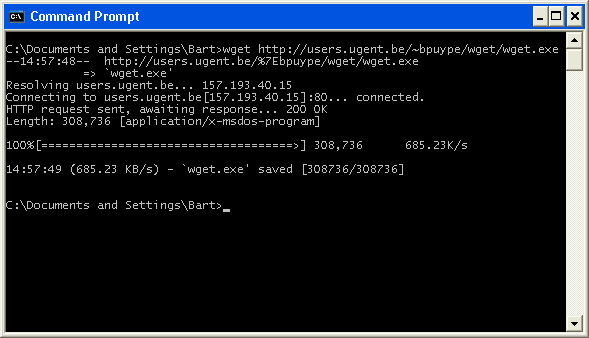

Example 2: Use Wget on protected sites or sites that use cookies Wget will download the HTML files in the same folder as “url_list.txt”. Use the command to download the links in the list: wget -i url_list.txt The file should contain multiple HTML URLs e.g.: Make a file called “url_list.txt” and place it somewhere in a folder. Example 1: Download content from a list of links If you are on a windows computer make sure that you have added Wget to PATH. I am still learning to use Wget so I might come back and update this article, but as for now, this is an easy start. It supports downloading from HTTP, HTTPS, and FTP. GNU Wget is a command-line software that retrieves content from the web.
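As a minimal sketch of Example 1, assuming a POSIX shell, the script below writes `url_list.txt` with one URL per line (the format `wget -i` reads) and then hands it to Wget. The example.com/example.org/example.net URLs are placeholders, not links from this post, and the `-P downloads`, `--tries=2` and `--timeout=10` flags are illustrative additions taken from the option list rather than part of the original command.

```shell
#!/bin/sh
# Sketch of Example 1: fetch every page listed in url_list.txt.
# The URLs are placeholders (assumption); replace them with your own.

# wget -i expects one URL per line.
cat > url_list.txt <<'EOF'
https://example.com/
https://example.org/
https://www.example.net/
EOF

# Only run wget if it can be found on PATH.
if command -v wget >/dev/null 2>&1; then
    # -i: read URLs from the file
    # -P: save into ./downloads instead of the current folder (illustrative)
    # --tries / --timeout: give up on a dead link quickly instead of retrying for long
    wget -i url_list.txt -P downloads --tries=2 --timeout=10 \
        || echo "wget exited with status $?" >&2
else
    echo "wget not found on PATH" >&2
fi
```

Dropping `-P downloads` gives back the plain `wget -i url_list.txt` behaviour, where files land in the folder you run the command from.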
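A sketch of Example 2, under two assumptions that are not stated in the post: the exported `cookies.txt` is in the Netscape cookie-file format that `--load-cookies` reads (the format cookies.txt-style browser extensions typically export), and `PAGE_URL` is a hypothetical placeholder for the protected page, since the original command does not show one. The script only calls Wget once both the cookie file and the `wget` binary are present.

```shell
#!/bin/sh
# Sketch of Example 2: reuse browser cookies so wget can fetch a page behind a login.
# PAGE_URL is a hypothetical placeholder; point it at the protected page.
PAGE_URL="https://example.com/members/"
COOKIES="cookies.txt"

if [ ! -f "$COOKIES" ]; then
    echo "Export $COOKIES from your browser into this folder first" >&2
elif ! command -v wget >/dev/null 2>&1; then
    echo "wget not found on PATH" >&2
else
    # --load-cookies: send the saved session cookies with each request
    # -p (--page-requisites): also fetch the images, CSS, etc. the page needs
    wget --load-cookies "$COOKIES" -p "$PAGE_URL" \
        || echo "wget exited with status $?" >&2
fi
```

If the site logs you out or the cookies expire, export a fresh `cookies.txt` and run the script again.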
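Several options from the list can be combined into a gentler, resumable batch download. This is an illustration rather than a recommendation from the post: the values (3 tries, 15-second timeout, 2-second wait, `200k` rate limit) and the `downloads` and `wget.log` names are arbitrary choices. The sketch collects the arguments with `set --`, prints the assembled command, and runs it only when `wget` and a `url_list.txt` file are both present.

```shell
#!/bin/sh
# Combine options from the option list; all values here are illustrative.
set -- \
    --continue \
    --tries=3 \
    --timeout=15 \
    --wait=2 \
    --random-wait \
    --limit-rate=200k \
    --directory-prefix=downloads \
    --output-file=wget.log \
    --input-file=url_list.txt
# --continue: resume partially downloaded files on a re-run
# --tries / --timeout: cap retries and how long each network step may take
# --wait + --random-wait: pause 1 to 3 s (0.5*2 to 1.5*2) between retrievals
# --limit-rate: cap bandwidth; --directory-prefix: target folder
# --output-file: write log messages to wget.log instead of the terminal

# Show the assembled command line.
echo "wget $*"

if command -v wget >/dev/null 2>&1 && [ -f url_list.txt ]; then
    wget "$@" || echo "wget exited with status $?; see wget.log" >&2
fi
```

Building the argument list with `set --` keeps each option quoted as its own word, so adding or removing a flag is a one-line change.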
